The next wave of AI is here. Upwind becomes Agentic.

One of the most fascinating technologies I’ve encountered in my personal life over the past few years is autonomous driving. It started as a curiosity: “can my car really drive itself?” Can it actually make decisions with enough context, rather than relying solely on the static things it sees, like trees and roads? Can it react to the real-time layers unfolding around it: pedestrians walking, cyclists nearby, cars moving fast, and ever-changing road and weather conditions? That’s a lot to take in.

Over time, what began as pure tech curiosity (“how does this thing actually work?”) became a realization: a 9-camera car powered by a neural network is better positioned to drive than I am. It isn’t distracted by the kids in the back. It isn’t replaying my last meeting or thinking about my next one.

That was the first time I realized how much we can gain by feeding context to AI and letting it make decisions on our behalf, even when our lives depend on it.

This wasn’t an “aha” moment that had us shipping something over a weekend. This project was personal to us. It’s a conviction we’ve carried for the past 18 months. We wanted to do it right.

Today, I’m beyond excited to announce one of the biggest releases in Upwind’s history: the Upwind Agentic Pack.

We’ve spent the last year and a half training our models, tuning our data, and assembling the context we need to help machines make sense of billions of data points, across zero-day attacks, active incidents, emerging threats, and the proactive work of fixing and preventing security weaknesses at cloud and enterprise scale.

The Upwind Agentic Pack

We went back to the drawing board and asked ourselves: what are the most complicated, time-consuming tasks we could take off our customers’ plates? What do customers actually do when they receive a signal from Upwind? That work spans three areas:

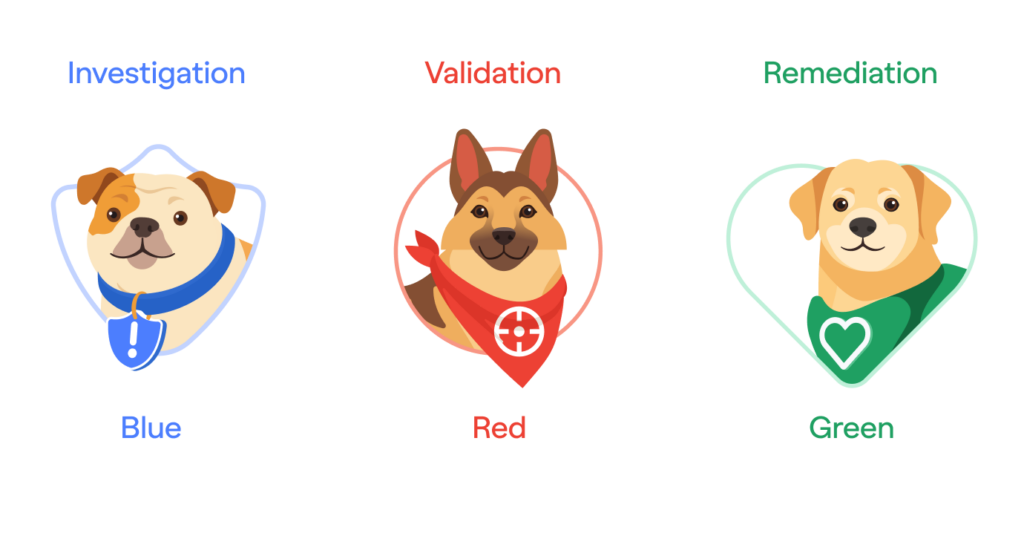

Investigation

When a threat pops up, or a zero-day strikes, customers need to investigate immediately. This means connecting the dots across dozens of signals from runtime and cloud logs, inventory, SBOMs, and data discovery. Customers need to move fast, without distraction, and they need accurate, distilled information so they can report up and start acting on it.

Validation

When a security “issue” or “finding” surfaces, such as a new resource carrying a critical vulnerability and sensitive data, or a new external exposure (for example, an S3 bucket or API endpoint that shouldn’t be public), customers need to validate it in context. Most importantly, they need to confirm that it’s a real problem: that the exposure is genuine and the attack surface is real.

Remediation

A security professional’s everyday work is to ensure tomorrow’s cloud is more secure than today’s. They do this by running proactive projects, building guardrails, and putting policies in place that make every release safer than the last. And this is the hardest, most time-consuming work of all. To do it, they need to solve the cloud problem itself: understanding the wiring from Git to production, at the pace of their engineering team, within the standards and infrastructure of their DevOps team. That’s a lot to make sense of.

Meet the Blue, Red, and Green AI Agents

That’s why today we’re launching three AI agents, each specialized to take on one of the biggest challenges in cloud and AI security. Meet the Upwind Blue, Red, and Green agents.

We’ve humanized the pack, too. They’re dog-agents, not robot-agents. Humanity’s best friends.

We’re just getting started here. Autonomous cars and AI security agents are solving the same problem, just in different worlds. Both operate on our behalf. Both depend on “cameras” to see. Both need full context: static data, but also dynamic, real-time data. Give them the right “eyes” and the right context, and eventually they’ll see more clearly, decide faster, and act more accurately than any human.

Cyber defense is leveling up. This is the next wave – Agentic Cloud Security.

Up & Up,

Amiram.